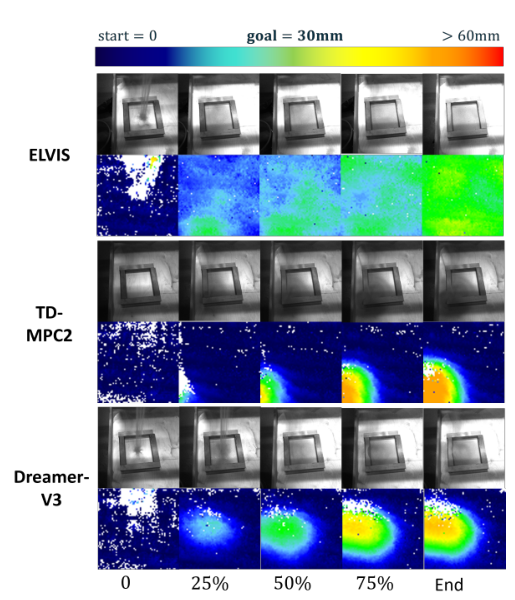

RSSM belief for occluded visual control

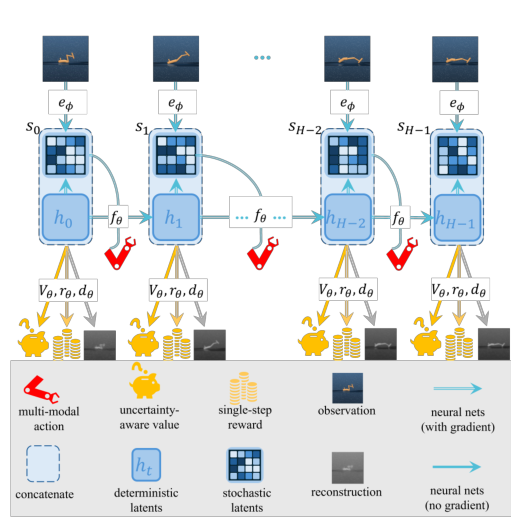

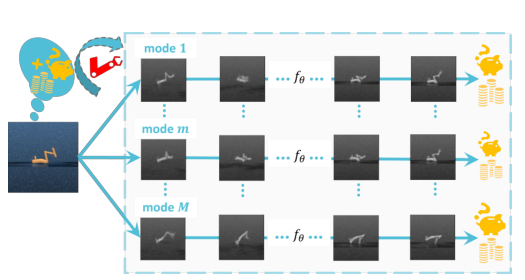

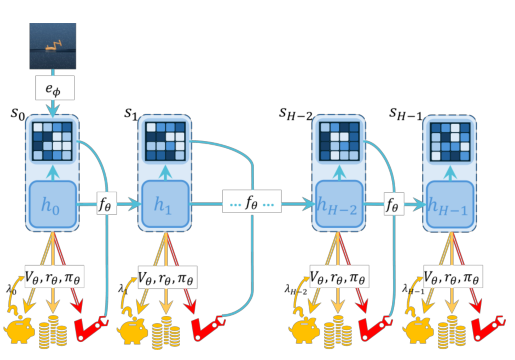

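The encoder infers stochastic latents from observations (the posterior), while a recurrent deterministic state carries memory forward across time steps. When observations are unavailable or unreliable, as under occlusion, planning rolls the belief forward using the learned prior latent transition alone, conditioned only on the deterministic state.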
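The belief update described here can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the model's actual implementation: the weights are random stand-ins for trained parameters, a plain tanh recurrence replaces the usual GRU, actions are omitted, and all names (`step`, `rollout`, `W_prior`, `W_post`, the sizes `H`, `Z`, `E`) are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
H, Z, E = 8, 4, 6  # deterministic, stochastic, and embedding sizes (arbitrary)

# Random weights stand in for trained parameters (assumption for illustration).
W_h = rng.normal(scale=0.3, size=(H + Z, H))          # recurrent update
W_prior = rng.normal(scale=0.3, size=(H, 2 * Z))      # prior head: h -> (mean, log_std)
W_post = rng.normal(scale=0.3, size=(H + E, 2 * Z))   # posterior head: (h, embed) -> (mean, log_std)


def sample(params, rng):
    """Draw a Gaussian latent from concatenated (mean, log_std) parameters."""
    mean, log_std = np.split(params, 2)
    return mean + np.exp(log_std) * rng.normal(size=mean.shape)


def step(h, z, embed, observed, rng):
    """One belief update: advance memory, then sample z from posterior or prior."""
    # Deterministic state carries memory forward (tanh RNN in place of a GRU).
    h = np.tanh(np.concatenate([h, z]) @ W_h)
    if observed:
        # Posterior: conditions on the current observation embedding.
        z = sample(np.concatenate([h, embed]) @ W_post, rng)
    else:
        # Prior: uses only the deterministic state, so the embedding is ignored.
        z = sample(h @ W_prior, rng)
    return h, z


def rollout(embeds, observed_mask, rng):
    """Filter through a sequence, falling back to the prior where masked out."""
    h, z = np.zeros(H), np.zeros(Z)
    states = []
    for embed, observed in zip(embeds, observed_mask):
        h, z = step(h, z, embed, observed, rng)
        states.append(np.concatenate([h, z]))
    return np.stack(states)


# Five steps with the middle two occluded.
embeds = np.random.default_rng(1).normal(size=(5, E))
mask = [True, True, False, False, True]
states = rollout(embeds, mask, np.random.default_rng(2))  # shape (5, H + Z)
```

The `observed` flag is the only switch between filtering and imagination: with every entry of the mask set to `False`, the same loop becomes an open-loop rollout of the kind a planner would use.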